Speed as a product feature

I’ve been reading the incredible book In the Plex: How Google Thinks, Works, and Shapes Our Lives, which is widely acknowledged as the best account of the inner workings of Google.

One of the many fascinating insights is Google’s obsession with speed.

Speed is unlike almost every other product feature, because achieving it usually means taking other things away. One of the reasons Google is able to achieve such low latency on its home page is its simplicity - there’s a search box and little else.

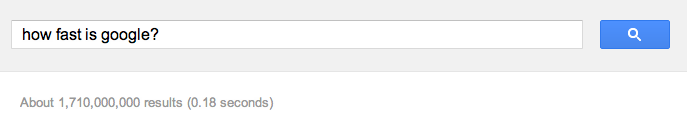

Also, note how the response time is displayed alongside the search results, almost like a badge of honour.

Acceptable speeds

Larry Page considers latency above 400 milliseconds unacceptable, and product teams breaching this upper limit are penalised by having resources or perks taken away until they make improvements.

The sort of latency we should be aiming for in an ideal world is below 100 milliseconds because most people perceive this as instantaneous.

However, most web developers have been content with pages loading in one or two seconds. But in cases where interactions are frequent, as is the case with Google search, this level of latency would seriously undermine engagement with the product.
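To make these thresholds concrete, here is a minimal sketch that times a call and classifies the result. Only the 100 millisecond and 400 millisecond figures come from the text above; the function names and the three labels are illustrative assumptions.

```python
import time

# Thresholds taken from the text above; the labels are illustrative.
INSTANT_MS = 100       # below this, most people perceive the response as instantaneous
UNACCEPTABLE_MS = 400  # above this, the latency is treated as unacceptable


def classify_latency(elapsed_ms):
    """Return a label for a measured latency in milliseconds."""
    if elapsed_ms < INSTANT_MS:
        return "instant"
    if elapsed_ms <= UNACCEPTABLE_MS:
        return "noticeable"
    return "unacceptable"


def timed(func, *args, **kwargs):
    """Call func and return (result, elapsed time in milliseconds)."""
    start = time.perf_counter()
    result = func(*args, **kwargs)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return result, elapsed_ms
```

For example, `timed(sum, [1, 2, 3])` returns the sum together with the time it took, and that time can be passed to `classify_latency` to see which band the operation falls into.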

Why latency is bad

Here are some specific arguments against latency:

- When the delay is noticeable, people begin thinking about other things, which breaks the seamless interaction with the product or service.

- People think the product is dumb or stupid, which is a bad attribute to have connected with a product. Most likely they will lose patience with the product and give up on it altogether.

The iPhone 3GS

When Apple launched the iPhone 3GS, much of the marketing revolved around the fact that it was faster than the previous model.

Speed is something people instinctively understand, so it makes sense for Apple to position the iPhone in this way. It also highlights just how desirable speed can be to consumers. It is also the marketing message behind almost every new desktop CPU.

Embedded system challenges

This obsession with speed can cause real problems in some applications. For a web service the speed can be boosted by increasing the number of servers. This is harder with embedded systems, where the device is what it is. There is no easy way to bolt on extra processing power without replacing the device.

This is beginning to change with the advent of cloud computing. For instance, most of the processing behind the Siri service happens in the cloud. A smartphone would be unable to provide such a service otherwise.

Even without the boost provided by cloud computing, there are still some useful heuristics which can help us design fast systems:

- Start with the fastest processor you can. This might sound obvious, but don’t penalise yourself unnecessarily.

- Understand customer expectations early on. If the customer expects a task to take one second, and you have already chosen a platform which takes 10 seconds, then there’s no way you’ll be able to optimise it well enough to meet expectations.

- Cheat in every way possible. For instance, cache content, pre-fetch content, and keep look-up tables for expensive computations.

- Give good feedback. If the customer’s expectations are set properly, the delay is more bearable. A progress bar is one way of doing this.

Difficult product trade-offs

Often speed is a trade-off, and not a straightforward one. For instance, increasing security often decreases speed because it adds computational overhead. So do we want a faster system or a more secure one? Or is there a way to resolve this contradiction?

Another example is speed versus power consumption. How large and power hungry are we happy for our devices to be?

Conclusions

It’s clear that speed is a fascinating area for designers to consider, and one which can make or break a product.